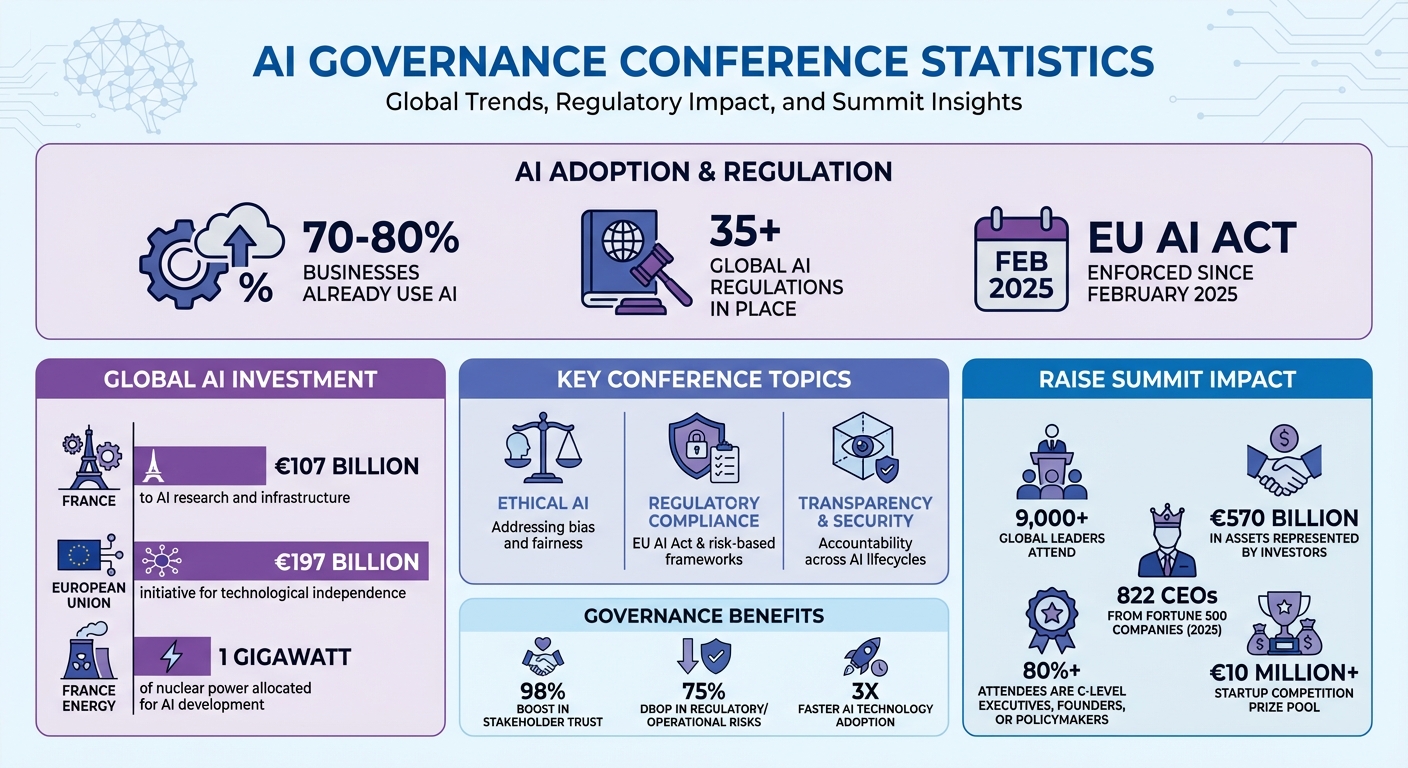

AI governance is reshaping conference agendas. Once focused on technical advancements, these events now prioritize discussions on responsible AI development, regulation, and ethics. Why? The rapid adoption of AI - already used by 70–80% of businesses - demands accountability. With laws like the EU AI Act enforced since February 2025 and over 35 global regulations in place, companies and governments are aligning strategies to balance innovation with risk.

Key topics dominating conferences include:

- Ethical AI: Addressing bias and fairness during development.

- Regulatory Compliance: Navigating frameworks like the EU AI Act and risk-based approaches.

- Transparency & Security: Ensuring accountability across AI lifecycles.

Events like the RAISE Summit in Paris exemplify this shift, offering structured tracks and actionable sessions to help businesses integrate governance into scalable AI systems. The focus is clear: responsible AI isn't just a legal requirement - it’s a business imperative.

AI Governance Conference Topics and Global Investment Statistics 2025-2026

Governing with Artificial Intelligence - 18 September 2025, 14:00 (CEST) | OECD, Paris, France

Main AI Governance Topics at Conferences

As AI governance gains traction, conferences are zooming in on specific themes that promote responsible AI use. Rather than just celebrating innovation, these events now tackle the challenges of scaling AI responsibly. Key topics like ethical AI, regulatory compliance, and transparency dominate discussions, addressing the hurdles that can hinder AI's move from pilot phases to widespread implementation.

Ethical AI and Algorithmic Bias

Conversations around fairness and bias-free AI design have become central. For example, back in February 2026, Dr. Fola Adeleke highlighted how critical decisions about AI fairness often occur during procurement and data selection. A single misstep here, such as a false positive, can lead to expensive investigations [7]. Reflecting this shift, the RAISE Summit introduced a session titled "Data Dilemmas: Navigating Privacy, Bias, and Quality in AI" as part of its "Friction" track, focusing on practical solutions rather than abstract debates [7].

Similarly, the 2026 Just AI Conference took a human rights-centered approach, organizing its agenda around themes like Democratic Futures and Digital Public Infrastructure. The message is clear: addressing bias early - during model design - is far more effective than reacting after deployment [8].

This ethical groundwork is essential for broader discussions on regulations and technical safeguards.

Regulatory Compliance and Standards

The debate between strict and flexible regulatory models is a hot topic at conferences. While the EU AI Act exemplifies a rigid approach, India advocates for a more adaptable, risk-based framework. Akshaya Suresh, a partner at JSA Advocates and Solicitors, explains:

"a principles-based, risk-harm-calibrated framework rather than a hard regulatory regime" [2].

This global contrast is shaping how organisations approach compliance. Some regions are even streamlining bureaucracy to stay competitive with AI powerhouses like the U.S. and China [4]. At the AI Action Summit 2025 in Paris, for instance, France pledged an impressive €107 billion to AI research and infrastructure, while the EU announced an even larger €197 billion initiative aimed at boosting technological independence [3][4]. France also allocated 1 gigawatt of nuclear power specifically for AI development, blending governance with infrastructure investment [4].

These moves show that governance isn't just about imposing rules; it's about creating a trusted foundation for AI growth.

Transparency, Accountability, and Security

The U.S. Department of Justice has updated its guidelines to include how companies ensure AI systems are trustworthy and accountable [12]. This shift has pushed conferences to focus on how governance measures are applied in real-world scenarios. Lee Dittmar from OCEG sums it up:

"Transparency in AI is foundational to building trust, ensuring accountability, and fostering a culture of ethical technology use" [12].

Sessions now address every stage of an AI system's lifecycle - data sourcing, model training, and post-deployment monitoring - to ensure accountability at all levels. Steve Holyer from Informatica underscores the importance of governance layers, stating:

"a governance layer must control how agents access and utilise data to prevent operational failure" [5].

This becomes even more crucial as AI systems evolve into autonomous entities capable of reasoning and planning. Beyond basic data protection, the focus now includes safeguarding against model destabilisation, a particularly pressing issue in military and defence applications [9][10]. To tackle these challenges, conferences are increasingly spotlighting "AI on AI" governance, where automated tools monitor other AI systems for bias, drift, and security issues [11][12].

These discussions underscore how AI governance is not just about mitigating risks but also about enabling sustainable and forward-thinking AI development.

RAISE Summit: AI Governance in Action

The RAISE Summit takes the principles of AI governance and puts them into practice through a structured and action-oriented agenda. It’s not just about discussing governance in theory; it’s about making it work in real-world AI applications. The event is built around the "4Fs" framework: Foundation, Frontier, Friction, and Future. Each track has a specific focus, with the "Friction" track zeroing in on challenges like compliance, safety protocols, public policy, and issues around privacy, bias, and data quality [1]. These sessions align with earlier conversations about ethical AI and regulatory measures, aiming to turn ambitious ideas into measurable outcomes [13]. Here’s a closer look at how the Summit transforms theory into action.

Governance Sessions at RAISE Summit

The Summit’s agenda is packed with sessions that highlight its hands-on approach to governance. For example, the 2025 edition featured a fireside chat with Clara Chappaz, France’s Minister Delegate for AI and Digital Affairs, focusing on national AI strategies. Another standout session, "Sovereign AI: The New Firewall", brought together leaders from Clarifai, Oracle, Credo, and Vaire Computing to discuss data sovereignty and security [1]. Karl Havard from Nscale outlined three must-haves for Sovereign AI: control, compliance, and growth.

Cybersecurity also took center stage, with Peter McKay from Snyk and representatives from Bank of America exploring the risks AI introduces to security frameworks. Meanwhile, Adrian Blair from TrustPilot shared insights on how companies can build consumer trust by being transparent in their AI practices [1].

Another key feature is the CxO Summit, an exclusive forum for Fortune 1000 executives. Held within the Louvre, this invite-only gathering provides a space to discuss AI strategies and tackle policy challenges. As Hadrien de Cournon, co-founder of RAISE Summit, puts it:

"The CxO Summit exists so companies don't just talk about AI, they leave RAISE with real partnerships, pilots, and signed deals" [16].

What Makes RAISE Summit Different

The RAISE Summit stands out not just for its content but also for its unique format. Hosted at the Carrousel du Louvre in Paris, the event draws over 9,000 global leaders, including a high concentration of C-level executives, founders, and policymakers - more than 80% of attendees fall into these categories [15][16]. The 2025 edition alone saw 822 CEOs from 168 Fortune 500 companies, and investors present represented over €570 billion in managed assets [16]. Eric Schmidt, former CEO of Google, described it as:

"the fastest-growing AI tech conference in Europe, and perhaps in history" [16].

The Summit also prioritizes collaboration across industries, connecting capital, technology, and policy to deliver real returns on investment and launch pilot programs [15]. Highlights include the RAISE Startup Competition, which offers a prize pool exceeding €10 million [17], and the MACHINA Summit, dedicated to advancements in physical AI and robotics [14].

RAISE Summit Ticket Options

| Ticket Category | Price (Early Bird) | Belangrijkste kenmerken | Eligibility |

|---|---|---|---|

| PRO | €999 + VAT | Access to exhibition floor, stages, workshops, Startup Competition, networking app, and official ecosystem party | Open to all |

| VIP | €1,899 + VAT | Includes all PRO features plus VIP lounge access, MACHINA Summit entry, startup pitch decks, and industrial trend reports | Open to all |

| VIP MAX | €3,499 + VAT | All VIP features plus a Versailles AI Gala dinner with executive and VIP guests | Open to all |

| DEVELOPER | €599 + VAT | Includes all PRO features | Open to emerging developers (application required) |

| STARTUP | €599 + VAT | Includes all PRO features | Companies with <€4.5M funding, <4 years old |

| VOLUNTEER | FREE | Event access and behind-the-scenes experience | Limited to selected applicants |

Early bird rates are available until 17 April 2026 for PRO tickets and 16 April 2026 for VIP and VIP MAX tickets [14].

sbb-itb-e314c3b

Examples of AI Governance in Conference Agendas

Case Studies: Governance Sessions

Conference agendas are now packed with sessions that turn governance theories into practical tools. At the European AI & Cloud Summit in May 2026, Azar Koulibaly, General Manager and Associate General Counsel at Microsoft Europe, delivered a session titled "Navigating the EU AI Act." This presentation broke down how organisations can use Microsoft's compliance templates to adapt EU AI regulations into actionable, risk-based frameworks [19]. Mac Mani, an attendee, shared his takeaway:

"The summit stressed that compliance is an opportunity to innovate responsibly, build trust, and gain a competitive edge in the global market" [19].

In March 2026, the IAPP Global Summit featured a breakout session called "Guidelines to Guardrails: Governing Agentic AI in Practice." Led by Frédérique Horwood (Cohere), Ryan Levman (Bell), and Maneesha Mithal (Wilson Sonsini), the session explored how to safely manage data flows and architectures in complex RAG-enabled systems, helping organisations transition from proof-of-concept to production [18].

Another standout example comes from MLcon Amsterdam in April 2026. Lorenzo Satta Chiris from the University of Exeter presented "AURA: A Practical Risk Framework for Autonomous AI Agents." This session tackled the challenge of immature safety practices for agents, offering a structured governance model. David Roldán Martínez, Chief AI & API Officer at David Roldán, commented:

"Without strong AI governance, even the most impressive models can become liabilities" [20].

These examples highlight how conferences are focusing on practical governance frameworks to address real-world challenges.

Putting AI Ethics into Practice

Conferences are also diving into actionable strategies to integrate ethical principles into AI workflows. At the Responsible AI Conference in June 2026, a panel discussion titled "Ethical AI Usage" brought together Jennifer Cheung (Pandora), Marianne Holding (Santander), and Paul McKane (Deliveroo). The session focused on embedding ethical guidelines into everyday operations and uncovering unintended consequences through rigorous monitoring [21].

The European AI & Cloud Summit also showcased sessions on "red-teaming" with tools like PyRIT to stress-test AI systems for safety before deployment. Attendees were introduced to integrated dashboards, such as the Responsible AI Dashboard and Purview Compliance Manager, which automate risk assessments and maintain detailed audit trails [19]. These tools demonstrated that governance frameworks don't hinder innovation but instead create a foundation for it [19].

The emphasis on practical solutions is unmistakable. Conferences now champion toolkits like Fairlearn for identifying bias, InterpretML for enhancing explainability, and Azure AI Content Safety for filtering harmful content. Organisations adopting these methods report measurable benefits, including a 98% boost in stakeholder trust, a 75% drop in regulatory and operational risks, and threefold faster adoption of AI technologies [19].

How to Approach AI Governance at Conferences

Tips for Attendees

Preparation is the key to getting the most out of governance-focused sessions. Start by building a strong understanding of major regulatory frameworks like the EU AI Act, the NIST AI Risk Management Framework, and ISO/IEC 42001. This groundwork equips you to actively participate in discussions about compliance, rather than just listening from the sidelines.

Seek out workshops that offer practical training in areas like AI auditing, ethical risk assessment, and drafting legal documentation. Pay special attention to sessions that tackle challenges such as demonstrating ROI, navigating compliance hurdles, and adapting workforces to new governance demands.

Networking is another essential tool. Use these opportunities to connect with professionals who’ve successfully embedded AI governance into broader digital risk strategies. Dive into discussions about the "4 Ps" of governance: People (who owns what), Process (risk management workflows), Policy (clear, actionable guidelines), and Proof (evidence and documentation). As Kaiti Huang, Head of AI Governance Advisory at Swiss Cyber Institute, puts it:

"Mature organisations go beyond having an 'AI policy.' They operate with a governance framework that defines accountability, processes, and evidence" [23].

While attendees need to come prepared with knowledge and a networking mindset, organisers also have a critical role in shaping the conversation around governance.

Recommendations for Organizers

For organisers, the focus should be on delivering content that addresses the realities of the "execution era" of AI governance. This means covering topics like regulatory enforcement, litigation trends, and the operational challenges organisations face today. The RAISE Summit, held annually at Le Carrousel du Louvre in Paris, provides a strong example. Its "4F Compass" framework - Foundation, Frontier, Friction, Future - ensures governance is woven throughout the event's programme.

Consider creating dedicated tracks that tackle issues like compliance challenges, cyber resilience, and ROI in governance practices. Vendor demonstrations should clearly highlight whether systems are compliant or non-compliant, with any necessary legal disclaimers included. Multi-disciplinary panels are another must-have. Bringing together developers, legal experts, civil society representatives, and government officials can foster trust and provide a well-rounded perspective. Practical takeaways, such as established tools for AI assurance, add even more value for attendees.

What's Next for AI Governance in Conferences

AI governance is evolving rapidly, and this shift is being reflected in conference agendas. With roles like Chief AI Governance Officer now appearing in the C-suite [22], the focus is moving toward topics such as agentic AI infrastructure, assurance literacy, and sovereign AI strategies. The European Union’s €200 billion investment in AI highlights how governance has become a cornerstone of economic development [6].

Jakub Szarmach captured this transition when he said:

"The year 2025 marked the definitive end of the 'AI ethics debate era' and the beginning of the 'AI governance execution era.' Abstract principles collided with concrete legislation, litigation, and boardroom accountability" [22].

Looking ahead, conferences will likely emphasize evaluation frameworks for autonomous agents, international cooperation on shared governance standards, and workforce resilience through better assurance training. At the same time, ongoing litigation around issues like bias, intellectual property, and deepfakes is shaping governance practices in real time.

Veelgestelde vragen

How do I know if my AI is “high-risk” under the EU AI Act?

To figure out if your AI system falls under the "high-risk" category as defined by the EU AI Act, you’ll need to carefully examine its classification criteria. High-risk systems are those that can have a major impact on safety, fundamental rights, or legal compliance. Common examples include applications in biometric identification, credit scoring, or managing critical infrastructure.

Start by conducting a thorough risk assessment of your AI applications. Pay close attention to how your system aligns with transparency and accountability standards. If your AI handles sensitive data or makes decisions that are crucial to safety, it’s more likely to be considered high-risk under the regulation.

What evidence should I bring to prove AI compliance and accountability?

To showcase AI compliance and maintain accountability, it's crucial to provide detailed documentation that aligns with governance frameworks, risk classifications, and regulatory standards - such as the EU AI Act. This includes:

- Transparency records: Ensure clear documentation of how decisions are made and data is processed.

- Risk management protocols: Outline the steps taken to identify, assess, and mitigate risks.

- Monitoring and auditing processes: Maintain logs and reports that demonstrate ongoing oversight and adherence to standards.

Additionally, operational frameworks, controls, and risk assessments must be thoroughly documented. This means keeping comprehensive records covering legal, ethical, and technical compliance. Audit trails are particularly important - they serve as concrete evidence that accountability measures are in place and actively followed.

Which governance tools help with bias, transparency, and safety in production?

Managing bias, ensuring transparency, and prioritising safety in AI development requires a set of well-defined tools and practices. These include ethical AI frameworks, risk management protocols, and adherence to regulations such as the EU AI Act.

Key measures involve using bias detection algorithms to identify and address unfair patterns, conducting fairness assessments to evaluate equitable outcomes, and implementing rigorous testing to guarantee safety in real-world applications. Together, these tools encourage responsible AI development by fostering transparency, minimising bias, and maintaining ethical standards throughout the production process.