Execution-focused AI programming is transforming how businesses use AI by enabling systems to complete tasks autonomously, not just provide insights. This approach integrates seven key components - foundation models, retrieval mechanisms, guardrails, orchestration, observability, evaluation, and serving infrastructure - to deliver measurable outcomes.

Why does this matter? Because most AI projects never get past the pilot phase. In 2025, 42% of companies abandoned most of their AI initiatives, and 95% of deployments showed no measurable profit impact. Companies that have shifted to task-driven AI, however, are already seeing results: Danfoss cut customer response times to near-instant, and Telus saves 40 minutes per interaction across 57,000 employees.

Key drivers of this shift:

- Mature orchestration platforms and governance frameworks.

- Fine-tuned, cost-efficient AI models (7–70 billion parameters).

- A growing demand for task-specific AI agents, with Gartner estimating 40% of enterprise apps will integrate them by late 2026.

The technology is evolving quickly, with agentic systems combining planning, tool use, memory, and feedback loops to execute tasks autonomously. For example, Macquarie Bank’s AI agents reduced false-positive security alerts and guided customers through self-service workflows, while SaaS companies reduced support costs by 98%.

Challenges remain, including deployment bottlenecks, fragmented data, and organizational resistance. But for those who overcome these hurdles, the rewards are substantial - improved efficiency, reduced costs, and better decision-making.

Execution-focused AI is not just about technology; it demands robust governance, hybrid infrastructures, and continuous validation to ensure reliability. Companies that integrate AI into daily workflows, invest in scalable systems, and prioritize clear accountability will lead this transformation.

Execution-Focused AI: Key Statistics and Business Impact 2025-2026



Core Features of Execution-Focused AI

Execution-focused AI takes a step beyond traditional chatbots by operating autonomously in continuous loops. These systems don’t just respond to prompts - they actively work toward defined outcomes using external tools like APIs and databases to achieve success criteria [6][5]. This marks a significant shift: while older AI models react to inputs, agentic systems aim for measurable results [6][11].

At the heart of this transformation are four components working together: planning, which breaks complex goals into smaller, actionable steps; tool use, which lets the AI interact with external software to complete tasks; memory, which retains both short-term context and long-term insights so the system can learn from past actions; and feedback loops, which verify outcomes and adapt strategies when things don’t go as planned [6]. It is the interaction between these four elements, rather than any one of them alone, that drives the intelligence behind these systems [7].

Agentic AI Systems: How They Work

The backbone of agentic AI is the Observe-Think-Act cycle, popularised by the ReAct (Reason + Act) pattern [6][5]. The system observes its current state, reasons about possible actions, executes the chosen action through a tool, and then observes the outcome to guide its next move. This creates a self-correcting process. For more complex tasks involving 5–20 or more steps, many organizations adopt a Plan-and-Execute model instead: one component drafts the entire plan, another executes it, and a third adapts the plan based on real-world feedback [5].
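A minimal sketch of the Observe-Think-Act loop in Python, assuming a `decide` callable stands in for the model call and tools are plain functions. The names (`react_loop`, `Decision`, `Step`, `lookup_order`) are hypothetical and not taken from any particular agent framework; the point is the shape of the loop: think, act through a tool, record the observation, and repeat until the policy finishes or a step cap is reached.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable

Tool = Callable[[str], str]  # a tool takes one string argument and returns an observation


@dataclass
class Decision:
    thought: str
    action: str    # a tool name, or "finish"
    argument: str  # tool input, or the final answer when action == "finish"


@dataclass
class Step:
    thought: str
    action: str
    argument: str
    observation: str


def react_loop(
    goal: str,
    decide: Callable[[str, list[Step]], Decision],
    tools: dict[str, Tool],
    max_steps: int = 10,
) -> tuple[str | None, list[Step]]:
    """Run Observe -> Think -> Act until the policy finishes or the step cap is hit."""
    history: list[Step] = []
    for _ in range(max_steps):
        # Think: the policy (a model call in a real system) sees the goal and all prior steps.
        decision = decide(goal, history)
        if decision.action == "finish":
            return decision.argument, history
        # Act: run the chosen tool; an unknown tool becomes an observation, not a crash.
        tool = tools.get(decision.action)
        if tool is None:
            observation = f"error: unknown tool {decision.action!r}"
        else:
            observation = tool(decision.argument)
        # Observe: record the outcome so the next Think step can correct course.
        history.append(Step(decision.thought, decision.action, decision.argument, observation))
    return None, history  # step budget exhausted without an answer


# Usage with a scripted policy standing in for the model.
def scripted_policy(goal: str, history: list[Step]) -> Decision:
    if not history:
        return Decision("I need the order status first.", "lookup_order", "A-17")
    return Decision("Status retrieved; answer the user.", "finish", history[-1].observation)


answer, trace = react_loop(
    "Where is order A-17?", scripted_policy, {"lookup_order": lambda oid: f"{oid}: shipped"}
)
```

Because an unknown tool is turned into an observation rather than an exception, the next Think step gets a chance to recover, which is what makes the loop self-correcting.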

The results speak volumes. For example, in February 2026, Macquarie Bank introduced agentic systems that increased user redirection to self-service channels by 38% while reducing false-positive security alerts by 40% [4]. These systems didn’t just answer questions - they autonomously managed security incidents and guided customers through multi-step workflows. Gartner predicts that by 2028, 15% of daily work decisions will be made autonomously by AI agents, without human intervention [9].

To keep these agents efficient and reliable, production teams set boundaries. For instance, limiting each agent to 7–10 tools minimizes confusion and reduces the risk of "hallucinated" function calls [10]. Similarly, maximum step counts (usually capped at 25), token budgets, and time limits prevent infinite loops that could lead to excessive API costs [10]. Some advanced setups use tiered model routing, where simpler tasks are handled by fast, cost-effective models like GPT-4o-mini, while more complex reasoning is reserved for higher-end models. This approach has been shown to cut costs by 60–70% without compromising reliability [5].
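These limits can be enforced with a small guard object that is charged on every step. This is a sketch under assumptions: the step default echoes the cap mentioned above, but the token and time defaults are illustrative, the model names returned by `route_model` are placeholders, and the routing rule (an explicit `needs_reasoning` flag plus a length check) is a deliberately crude stand-in for whatever classifier a production router would use.

```python
from __future__ import annotations

import time
from dataclasses import dataclass, field

MAX_TOOLS_PER_AGENT = 10  # upper end of the 7-10 range discussed above


class BudgetExceeded(RuntimeError):
    """Raised as soon as a run crosses any of its hard limits."""


@dataclass
class RunBudget:
    max_steps: int = 25
    max_tokens: int = 50_000     # illustrative assumption
    max_seconds: float = 120.0   # illustrative assumption
    steps: int = field(default=0, init=False)
    tokens: int = field(default=0, init=False)
    started: float = field(default_factory=time.monotonic, init=False)

    def charge(self, tokens: int) -> None:
        """Record one agent step and its token usage, then check every limit."""
        self.steps += 1
        self.tokens += tokens
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} exceeded")
        if self.tokens > self.max_tokens:
            raise BudgetExceeded(f"token limit {self.max_tokens} exceeded")
        if time.monotonic() - self.started > self.max_seconds:
            raise BudgetExceeded(f"time limit {self.max_seconds}s exceeded")


def check_toolset(tools: list[str]) -> None:
    """Refuse to start an agent that has been handed too many tools."""
    if len(tools) > MAX_TOOLS_PER_AGENT:
        raise ValueError(f"{len(tools)} tools registered; limit is {MAX_TOOLS_PER_AGENT}")


def route_model(task: str, needs_reasoning: bool) -> str:
    """Tiered routing: a cheap model by default, a premium model only for hard tasks."""
    if needs_reasoning or len(task) > 2_000:
        return "premium-reasoning-model"  # placeholder name
    return "small-fast-model"             # placeholder name
```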

To ensure these autonomous systems deliver consistent results, organizations rely on robust validation protocols.

Objective-Validation Protocols

Validating an agent’s performance goes beyond checking its final output. Trajectory evaluation reviews the entire sequence of actions, tool usage, and intermediate results rather than just the end goal [12][13]. When an agent claims it has completed a task, it must provide evidence - such as logs, screenshots, test results, or database updates - to prove its success [15]. This shift from trusting the AI’s claims to requiring verifiable proof has been pivotal for enterprise adoption, reinforcing the need for measurable outcomes.
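One way to make "evidence, not claims" concrete is a checker that inspects the recorded trajectory and rejects a completion claim that lacks the required artifacts. The sketch below assumes a simple trace format (`TraceStep`) and evidence keys such as `test_results`; both are hypothetical and would map onto whatever a real tracing system records.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TraceStep:
    tool: str
    ok: bool     # did the tool call succeed?
    output: str


@dataclass
class CompletionClaim:
    task_id: str
    # Evidence keyed by type, e.g. {"test_results": "42 passed", "db_row": "order 17 -> refunded"}
    evidence: dict[str, str] = field(default_factory=dict)


def evaluate_trajectory(
    steps: list[TraceStep],
    claim: CompletionClaim,
    allowed_tools: set[str],
    required_evidence: list[str],
    max_steps: int = 20,
) -> list[str]:
    """Judge the whole run, not just the final answer. An empty list means it passes."""
    problems: list[str] = []
    if not steps:
        problems.append("no actions recorded for a task claimed as complete")
    if len(steps) > max_steps:
        problems.append(f"trajectory too long: {len(steps)} steps (limit {max_steps})")
    for i, step in enumerate(steps):
        if step.tool not in allowed_tools:
            problems.append(f"step {i}: tool {step.tool!r} is not permitted for this task")
    if steps and not steps[-1].ok:
        problems.append("trajectory ends on a failed tool call")
    # The claim only counts if every required piece of evidence is present and non-empty.
    missing = [key for key in required_evidence if not claim.evidence.get(key)]
    if missing:
        problems.append(f"claim lacks evidence: {', '.join(missing)}")
    return problems
```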

Modern validation systems operate across multiple layers, as shown below:

| Validation Layer | Mechanism | Purpose |
|---|---|---|
| Input Validation | JSON Schema / Zod | Filters out malformed tool arguments before execution [12][5] |
| Action Budgets | Token/Cost/Step limits | Prevents infinite loops and runaway expenses [5][13] |
| Output Filtering | Content filters / PII checks | Ensures compliance and prevents sensitive data leaks [5] |
| Self-Reflection | Critique/Reflection loops | Enables the agent to learn and self-correct based on observed mistakes [6][14] |
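The input-validation and output-filtering layers can be sketched without any dependencies. The schema format below is a hand-rolled stand-in for JSON Schema or Zod, the `refund` tool and its fields are hypothetical, and the two regular expressions only catch e-mail addresses and card-like digit runs; a real PII filter would need to cover far more patterns.

```python
from __future__ import annotations

import re

# Hand-rolled argument schema for a hypothetical "refund" tool.
# A real deployment would express this as JSON Schema (or Zod in TypeScript).
REFUND_SCHEMA: dict[str, type] = {
    "order_id": str,
    "amount_eur": float,
}


def validate_args(args: dict, schema: dict[str, type]) -> list[str]:
    """Input validation: reject malformed tool arguments before anything executes."""
    errors = [f"missing field: {key}" for key in schema if key not in args]
    errors += [f"unexpected field: {key}" for key in args if key not in schema]
    for key, expected in schema.items():
        if key in args and not isinstance(args[key], expected):
            errors.append(f"{key}: expected {expected.__name__}, got {type(args[key]).__name__}")
    return errors


EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
CARD = re.compile(r"\b(?:\d[ -]?){13,16}\b")  # 13-16 digits, optionally space/dash separated


def redact_pii(text: str) -> str:
    """Output filtering: mask e-mail addresses and card-like numbers before text leaves the system."""
    text = EMAIL.sub("[EMAIL]", text)
    return CARD.sub("[CARD]", text)
```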

For high-stakes tasks - like deleting data, processing payments, or sending external communications - systems enforce human-in-the-loop checkpoints. These require explicit human approval before the agent can proceed [12][5][13]. Currently, only 4% of teams allow agents to act fully autonomously, reflecting a cautious approach to granting full independence to these systems [8].
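A human-in-the-loop checkpoint can be as simple as a gate in front of the tool executor. In this sketch the `HIGH_STAKES` set and the `approve` callback are assumptions; in practice `approve` might post to a review queue or chat channel and block until a person responds.

```python
from __future__ import annotations

from typing import Callable

# Hypothetical set of actions that always require explicit human sign-off.
HIGH_STAKES = {"delete_records", "process_payment", "send_external_email"}


class ApprovalDenied(PermissionError):
    """Raised when a reviewer rejects a high-stakes action."""


def execute_with_checkpoint(
    action: str,
    args: dict,
    run: Callable[[str, dict], str],
    approve: Callable[[str, dict], bool],
) -> str:
    """Run low-risk actions directly; pause high-stakes ones until a human approves."""
    if action in HIGH_STAKES and not approve(action, args):
        raise ApprovalDenied(f"{action} rejected by reviewer")
    return run(action, args)
```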

One global insurance company saw agent accuracy improve from 85% to 95% within two months by adopting continuous tuning and evidence-based validation [13]. The key to this success was treating validation as an ongoing process. By learning from every verified outcome, the system continuously refined its decision-making capabilities.

Tools for Execution-Focused AI Development

When it comes to tools designed for execution-focused AI development, three platforms stand out: Cursor, Claude Code, and GitHub Copilot. By the end of 2025, Cursor reached nearly €475 million in annual recurring revenue, while GitHub Copilot held a commanding 42% share of the paid AI coding tools market [18]. Each tool offers distinct features tailored to different aspects of the development process. Here's a closer look at their strengths and capabilities.

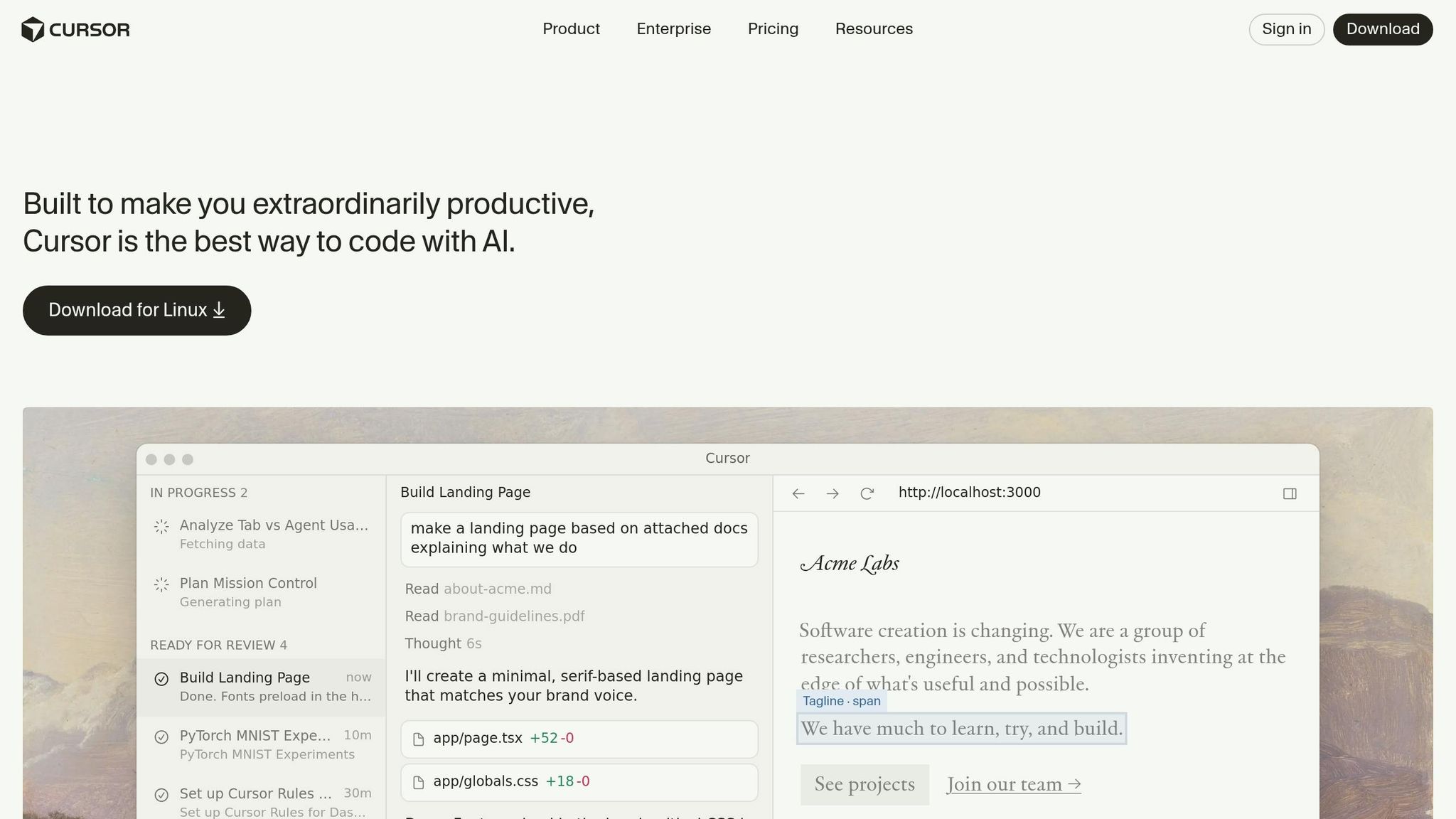

Cursor: IDE-Native Agent

Cursor reimagines the developer experience by embedding AI deeply into its VS Code fork. Unlike traditional plugins, Cursor treats AI as an integral part of the workflow. Its Composer mode allows seamless multi-file editing with real-time diff reviews, while the Agent mode can execute terminal commands, run tests, and even correct code errors automatically.

With a user base exceeding 360,000 paying customers and an impressive 4.9/5 rating, Cursor excels at day-to-day development tasks, particularly for developers who value immediate visual feedback. It also supports switching between models like Claude, GPT, or Gemini, depending on the complexity of the task. Pricing is tiered, starting at approximately €19/month for the Pro plan, €38/month for Business, and up to €190/month for the Ultra plan.

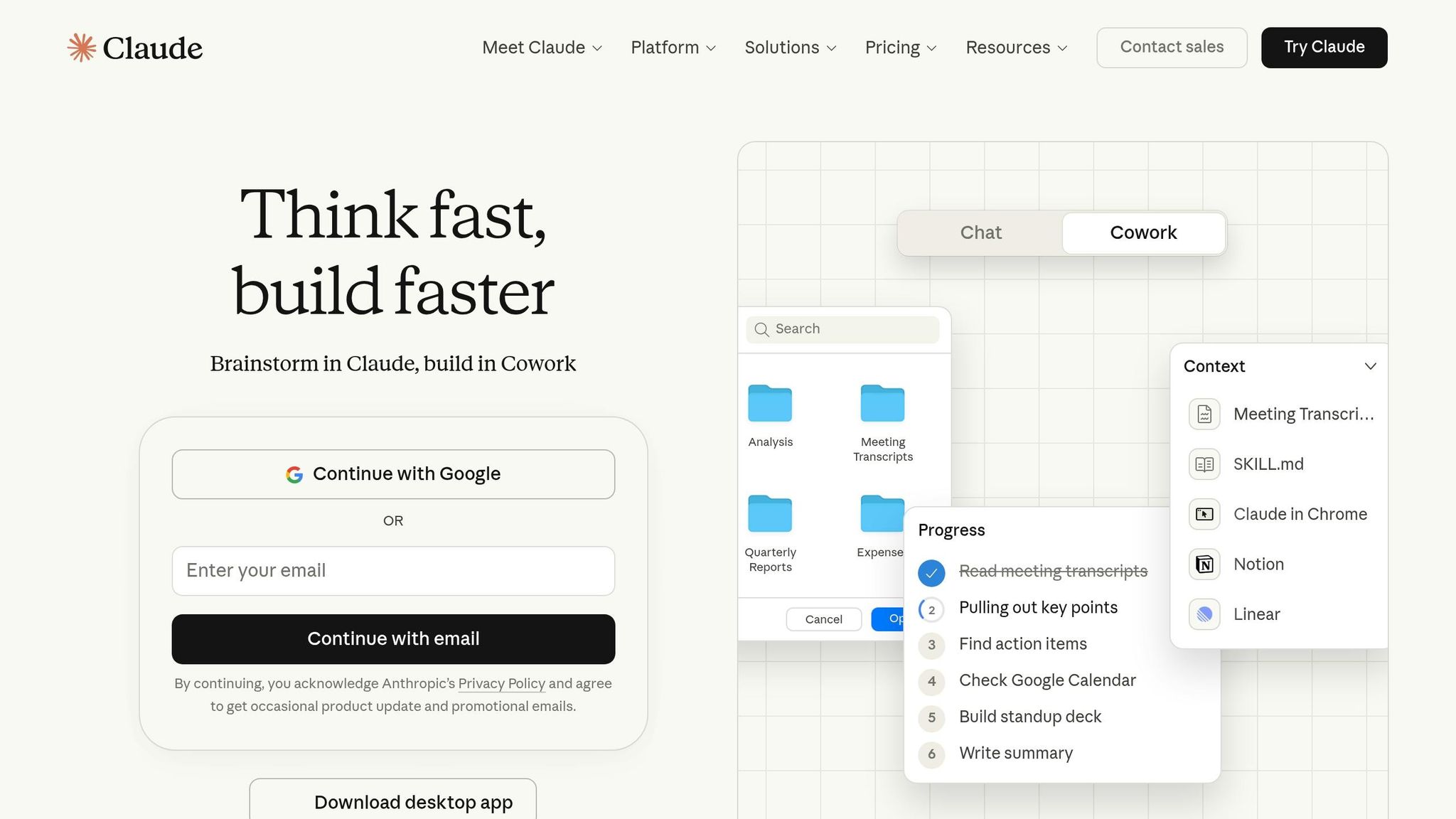

Claude Code and GitHub Copilot

Claude Code works entirely through the terminal, acting as a command-line agent that can explore large codebases, run tests, and commit changes autonomously. It reportedly achieves a 75% success rate when modifying codebases of more than 50,000 lines and scores between 77% and 79% on SWE-bench Verified [18]. One standout feature is a beta context window of up to 1 million tokens, large enough to handle entire monorepos. It can also persist session state in external files such as "todo.md". Claude Code is available through the Claude Pro subscription at around €19/month or via pay-per-use API pricing.
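Persisting session state to a file is a general pattern any agent can use; the sketch below does not reproduce Claude Code's own behaviour. It assumes a Markdown checklist format (`- [x] task`) for a hypothetical `todo.md`, so that a new session can reload which steps are already done.

```python
from __future__ import annotations

from pathlib import Path


def save_todo(path: Path, tasks: dict[str, bool]) -> None:
    """Write the task list as a Markdown checklist the agent can re-read in a later session."""
    lines = [f"- [{'x' if done else ' '}] {name}" for name, done in tasks.items()]
    path.write_text("\n".join(lines) + "\n", encoding="utf-8")


def load_todo(path: Path) -> dict[str, bool]:
    """Parse the checklist back into {task: done}; a missing file means a fresh session."""
    if not path.exists():
        return {}
    tasks: dict[str, bool] = {}
    for line in path.read_text(encoding="utf-8").splitlines():
        # Expected shape: "- [x] name" or "- [ ] name"; anything else is ignored.
        if line.startswith("- [") and len(line) > 6 and line[4] == "]":
            tasks[line[6:]] = line[3] == "x"
    return tasks
```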

GitHub Copilot has evolved from an autocomplete tool into a more autonomous system with its "Copilot Coding Agent." This feature allows developers to assign GitHub issues directly to the AI, enabling it to create pull requests independently. The tool integrates seamlessly into GitHub workflows and includes enterprise-ready features like IP indemnity and audit logs. Developers report gaining up to 92% more time for high-impact work when using Copilot. Pricing starts at approximately €9.50/month for individuals, with enterprise plans reaching up to €37/month.

These tools represent a practical shift in AI development, offering solutions that cater to diverse workflows and priorities.

Tool Comparison

The table below highlights the key differences between these platforms to help developers choose the right tool for their needs.

| Feature | Cursor | Claude Code | GitHub Copilot |
|---|---|---|---|
| Primary Interface | IDE (VS Code Fork) | Terminal / CLI | IDE Extension / GitHub Web |
| Autonomy Level | High (Composer/Agent mode) | Very High (Full-cycle agent) | Moderate (Agent mode with PR integration) |
| Context Window (tokens) | 70,000–120,000 effective | 200,000–1M (beta) | 64,000–128,000 |
| Strengths | Multi-file editing, diff review, model flexibility | Deep reasoning, test-driven refactoring | Ecosystem integration, IP indemnity, security features |
| Weaknesses | IDE lock-in, resource intensive | CLI-only, steeper learning curve | Limited context handling for very large codebases |
| Best For | Daily development with visual feedback | Large-scale refactors and greenfield projects | Enterprise environments with GitHub workflows |

A Tag-Team Approach

Senior engineers increasingly combine these tools to maximize productivity: Cursor keeps them in flow during day-to-day coding, while Claude Code handles large-scale refactoring. As Builder.io put it:

"Cursor gives you exactly what you asked for. Claude Code gives you what you asked for plus everything it thinks you'll need" [17].

Strategies for Implementing Execution-Focused AI

Rolling out execution-focused AI requires more than just cutting-edge algorithms - it demands clear accountability and a robust infrastructure to handle real-world complexities. Two key areas stand out: governance frameworks and hybrid computing architectures.

Governance and Accountability

The gap between AI adoption and operational oversight is stark. While 83% of organisations report using AI systems, only a small fraction - 13% - have visibility into how those systems function [24]. This disconnect raises significant risks for decision-making, compliance, and customer trust.

To address this, organisations are adopting structured governance frameworks such as the NIST AI Risk Management Framework and ISO/IEC 42001, which establish clear accountability through defined roles like AI Risk Officers and Model Owners [19][22]. This ensures that someone is always responsible for the system’s behaviour and outcomes.

Governance isn’t just theoretical - it needs to be embedded into everyday workflows. For example, automated checks in CI/CD pipelines can flag issues in AI-generated code before deployment [21]. In high-risk scenarios, human oversight remains critical. Mechanisms like human-in-the-loop (HITL) systems and "kill switches" allow organisations to intervene or halt autonomous operations when necessary [22][24].
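A kill switch is essentially a shared flag that the agent checks before each action. The sketch below uses `threading.Event` so that a monitor running in another thread could trip it; the class and function names are hypothetical, and a real deployment would also need to cancel in-flight work, which this loop does not attempt.

```python
from __future__ import annotations

import threading
from typing import Callable, Iterable


class KillSwitch:
    """A shared flag that any operator or monitor can trip to halt autonomous work."""

    def __init__(self) -> None:
        self._event = threading.Event()
        self.reason = ""

    def trip(self, reason: str) -> None:
        self.reason = reason  # set the reason before signalling so readers see it
        self._event.set()

    @property
    def tripped(self) -> bool:
        return self._event.is_set()


def run_actions(actions: Iterable[Callable[[], str]], switch: KillSwitch) -> list[str]:
    """Execute queued actions, checking the switch before each one.

    A halt takes effect at the next step boundary; an action already running finishes.
    """
    results: list[str] = []
    for action in actions:
        if switch.tripped:
            results.append(f"halted: {switch.reason}")
            break
        results.append(action())
    return results
```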

One growing challenge is managing the surge of non-human identities in AI-powered operations; in some cases, they outnumber human identities by a staggering 82:1 [20]. Emerging standards such as the Model Context Protocol (MCP), which defines how agents connect to external tools and data sources, give organisations a common layer at which to track and control what these autonomous agents can access. Shalini Harkar, Lead AI Advocate at IBM, captures the importance of governance:

"Governance emerges as the bridge between technical advancements and organisational accountability" [22].

The stakes couldn’t be higher. Under the EU AI Act, the most serious violations can draw fines of up to €35 million or 7% of worldwide annual turnover, whichever is higher [22]. On the flip side, organisations that prioritise AI security measures save an average of €1.76 million per data breach [20], and 64% of consumers report greater trust in companies with transparent AI practices [23].

But governance is only part of the equation. The infrastructure supporting these systems must also evolve.

Cloud-Plus-Edge Infrastructures

Relying solely on cloud-based AI deployments is becoming less practical. By early 2026, 23% of organisations had shifted their production AI workloads on-premises to better manage costs and performance, while only 8% remained fully cloud-based [20]. This shift reflects the growing need for lower latency, cost efficiency, and greater control.

Hybrid infrastructures offer a balanced approach. Tasks can be distributed across multiple tiers: lightweight processing happens on devices, more intensive neural tasks run on local micro-GPU clusters, and large-scale aggregation occurs on regional cloud nodes [25]. To make this work, organisations need to implement latency budgeting - breaking down processes into smaller components and assigning strict time budgets to each step to ensure smooth user experiences [25].
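Latency budgeting can be checked both at design time (do the per-stage allocations fit the end-to-end target?) and at run time (which stage overran?). The stage names and millisecond figures below are illustrative assumptions, not values from the cited deployment.

```python
from __future__ import annotations

# Hypothetical end-to-end target and per-tier allocations, in milliseconds.
TOTAL_BUDGET_MS = 300
STAGE_BUDGETS_MS = {
    "on_device_preprocess": 20,   # lightweight processing on the device
    "edge_inference": 120,        # neural work on a local micro-GPU cluster
    "cloud_aggregation": 130,     # large-scale aggregation on a regional cloud node
    "network_slack": 30,          # headroom for network jitter
}


def check_budget_plan(stages: dict[str, int], total: int) -> None:
    """Design-time check: fail fast if the stage budgets don't fit the end-to-end target."""
    allocated = sum(stages.values())
    if allocated > total:
        raise ValueError(f"stages need {allocated} ms but the target is {total} ms")


def over_budget(measured_ms: dict[str, float], stages: dict[str, int]) -> list[str]:
    """Run-time check: return the stages whose measured latency exceeded their allocation."""
    return [name for name, ms in measured_ms.items() if ms > stages.get(name, 0)]
```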

A real-world example of this approach comes from Google Cloud’s "Google AI at Google" initiative. In 2026, under COO Francis deSouza, Google enhanced its sales intelligence layer on Vertex AI, boosting lead-to-opportunity conversion rates by 14% in just six weeks. Their marketing campaign agent also saved 18,000 hours in 2025 by auto-generating multi-channel assets [27]. DeSouza underscored the importance of data in these efforts:

"There is no AI strategy without a data strategy. Your data must be unified, governed, and secure" [27].

For computing architecture, flexibility is key. Stateless agents with unpredictable traffic can benefit from serverless platforms such as AWS Lambda or Google Cloud Run, while stateful agents that need stable, long-running environments are better suited to containerised deployments on platforms like Kubernetes [26]. This mix ensures scalability while maintaining control over sensitive operations.

Beyond software, infrastructure planning must account for physical demands. High-density AI workloads often require a shift from traditional air cooling to hybrid or liquid cooling systems. This change calls for closer collaboration between IT teams and facilities management to avoid costly retrofits [20]. These logistical details are critical for ensuring long-term efficiency and performance.

Applications and Implementation Challenges

The following real-world examples show how execution-focused AI programming is already making a difference across industries.

Industry Use Cases

Finance is leading the charge in adopting these technologies. Goldman Sachs, for instance, uses autonomous agents built on Anthropic's Claude for tasks such as transaction reconciliation and client onboarding. The agents track transactions across systems, investigate discrepancies, and categorize issues for resolution [29][32]. Marco Argenti, the firm’s Chief Information Officer, described them this way:

"Think of it as a digital co-worker for many of the professions within the firm that are scaled, are complex and very process intensive" [32].

Manufacturing is seeing tangible efficiency gains. AGCO, an agricultural equipment maker, adopted the Proceedix Connected Worker platform alongside Google Glass to provide hands-free work instructions. The setup cut process time by 35%, shortened operator learning curves by 50%, and saved each operator an hour of wasted time per day [28]. Peggy Gulick, AGCO’s Director of Digital Transformation, explained:

"All seven wastes of Lean manufacturing - Transport, Inventory, Motion, Waiting, Over-Processing, Overproduction, and Defects - have ultimately become metrics for AGCO as we continue to audit our success" [28].

Healthcare and Life Sciences are also leveraging AI in critical ways. Between April 2022 and 2023, Merck ran Phaidra’s AI Virtual Plant Operator at its 650,000 m² Pennsylvania facility, where it autonomously managed cooling for vaccine production. The system cut energy use by 16.2%, improved thermal stability by 70.5%, and reduced excess equipment runtime by 50.9% [30]. Similarly, AtlantiCare introduced clinical documentation agents in January 2026, reaching 80% adoption among providers and saving doctors about 66 minutes per day [37].

Customer Support has undergone a transformation. Salesforce reduced its support workforce by 66%, from 9,000 to around 3,000, by deploying AI agents to handle first-line inquiries and classify cases [29]. Meanwhile, at its Cisco Live conference in Amsterdam in February 2026, Cisco showcased AI agents that analyze telemetry data to detect performance issues and execute fixes faster than human operators can triage alerts [29].

Product Development teams are finding creative uses for AI. Pigment developed the "Customer Idea Agent" (CIA), a system of custom LLM agents that gathers and organizes feature requests from platforms like Slack, Zendesk, and Gong. This helps teams prioritize product roadmaps based on revenue potential [31].

These examples highlight how AI is reshaping industries, though its implementation is not without hurdles.

Common Implementation Challenges

Despite these advancements, moving from experimentation to full production remains a major hurdle. Only 10% of agentic AI pilots make it to live production, and Gartner estimates that over 40% of such projects will be abandoned by 2027 - not due to technical issues, but because of deployment challenges [29].

One key obstacle is the verification bottleneck. While AI has made generating code and content cheaper, manual quality assurance struggles to keep up with the volume, capping throughput. Even though 97% of developers use AI coding assistants, only 39% report measurable business results [35].

Process debt adds to the complexity. Integrating AI into outdated workflows often shifts bottlenecks downstream instead of solving them. Nicolas Teisseyre, Senior Partner at Roland Berger, put it this way:

"The AI experimentation era is over. Organisations that treat AI as a technology overlay are falling behind. Winners reimagine their entire operating model" [34].

Fragmented, low-quality data is a persistent issue and part of a wider deployment problem. Fredrik Falk of Beam.ai summarized the challenge:

"The bottleneck is no longer 'can AI agents do the work.' It's 'can your organisation deploy them'" [29].

Organisational resistance is another significant barrier. Only 6% of U.S. companies have moved AI projects into production, with half citing a lack of skilled resources as the main issue [36]. Successful implementation often requires smaller, highly skilled teams where roles like Product Managers and Developers evolve to focus more on technical orchestration and verification [35]. Without proper training and change management, frontline employees may view AI as a threat rather than a tool for empowerment [36][37].

The stakes are high. Global spending on AI is expected to hit about €2.38 trillion in 2026 - a 44% year-over-year increase [29]. Yet, 95% of generative AI pilots fail to deliver meaningful business results, largely due to implementation and cultural challenges rather than technical limitations [33]. For those who overcome these barriers, the rewards are substantial, with manufacturing applications reporting returns of 200–400% within 12–18 months [37]. Addressing these challenges is crucial to unlocking the full potential of execution-focused AI.

Future Trends in Execution-Focused AI Programming

As AI continues to evolve, the next wave of advancements is heavily focused on improving hardware efficiency and ensuring reliable performance. A key development in this space is the rise of hardware-aware models, which are specifically tailored for GPUs, FPGAs, and domain-specific accelerators like TPUs and NPUs, rather than relying on traditional CPUs [38][39]. This shift is not just about performance; it also addresses a critical challenge: growing energy demand. By 2030, electricity consumption in data centres, with AI as a major contributor, is expected to exceed 945 terawatt-hours [40]. With the global AI market projected to reach nearly €3.3 trillion by 2033, efficiency and reliability are becoming essential priorities [40].

Hardware-Aware Models and Vericoding

One of the most promising developments in this area is compact models that deliver strong performance while activating far fewer parameters per token. For instance, Alibaba's Qwen3-Coder-Next uses a Mixture-of-Experts architecture that activates only 3 billion of its 80 billion parameters. It scores 74.2% on SWE-bench Verified at just 1/11th the cost, around €0.0077 per million tokens [16]. Such innovations cut both costs and energy use, addressing the "intelligence per joule" challenge.
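To see why such a model is cheap to run, note that activating 3 billion of 80 billion parameters means only about 3.75% of the weights participate in each token. The mechanism is a router that scores every expert and runs only the top k. The toy sketch below uses scalar "experts" and a softmax over the selected experts' scores; it illustrates generic top-k routing and makes no claim about Qwen3-Coder-Next's actual expert count, value of k, or normalisation scheme.

```python
from __future__ import annotations

import math
from typing import Callable


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    x: float,
    gate_logits: list[float],
    experts: list[Callable[[float], float]],
    k: int = 2,
) -> tuple[float, list[int]]:
    """Sparse Mixture-of-Experts step: only the top-k experts by gate score are evaluated.

    Gate weights are renormalised over the selected experts; all other experts are skipped,
    which is where the compute saving comes from.
    """
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    weights = softmax([gate_logits[i] for i in top])
    y = sum(w * experts[i](x) for w, i in zip(weights, top))
    return y, top
```

In a real model each expert is a feed-forward block and the gate logits come from a learned projection of the token's hidden state; the sparsity logic is the same.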

On the coding front, vericoding is transforming how bug-free, verifiable code is generated. What used to rely on manual review is now shifting toward automated enforcement. A notable example comes from February 2026, when Cody Lee, Datadog's Platform Engineering Director, used Claude Code to rewrite a 283-command CLI from Go to Rust. By employing a verification harness with schema-based snapshot testing, his team achieved 96.2% behavioural parity, with the AI autonomously resolving subtle authentication bugs [43]. As Lee aptly put it:
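The verification-harness idea can be sketched as a parity check: run the same commands through the old and new implementations, normalise each output into a canonical snapshot, and count matches. This is an assumption-laden stand-in for the team's schema-based snapshot tests: the runners here are in-process functions, whereas a real harness would invoke the Go and Rust binaries and parse their structured output.

```python
from __future__ import annotations

import json
from typing import Callable

Runner = Callable[[list[str]], dict]  # hypothetical: argv in, parsed structured output out


def normalise(output: dict) -> str:
    """Canonical JSON, so key order and whitespace don't count as behavioural differences."""
    return json.dumps(output, sort_keys=True, separators=(",", ":"))


def parity_report(commands: list[list[str]], legacy: Runner, rewrite: Runner) -> tuple[float, list[str]]:
    """Run every command through both implementations and compare their snapshots.

    Returns the parity percentage and the commands whose outputs differ.
    """
    if not commands:
        raise ValueError("no commands to compare")
    mismatches = [
        " ".join(argv) for argv in commands if normalise(legacy(argv)) != normalise(rewrite(argv))
    ]
    matched = len(commands) - len(mismatches)
    return 100.0 * matched / len(commands), mismatches
```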

"The quality of AI-assisted development is bounded by the quality of its verification signals" [43].

Meanwhile, wafer-scale integration is sharply reducing inference latency. The Cerebras WSE-3, with 4 trillion transistors and 900,000 AI cores on a single wafer, keeps data movement to a minimum, enabling near-instant AI responses [41]. Additionally, smaller, specialised models with 1.5 billion parameters are outperforming larger ones in tool-calling tasks by avoiding the "JSON tax", the processing overhead involved in handling complex structured data [16].

Enterprise Security Improvements

Security frameworks are undergoing a major transformation, moving from reactive fixes to proactive designs. A 2026 global scan revealed 18,422 instances of the OpenClaw framework exposed to zero-click remote code execution due to weak sandboxing in Model Context Protocol connectors [16]. This highlighted the growing need for hardware-based Trusted Execution Environments and isolated agent execution, which are now becoming the norm in enterprise AI deployments [40].

Another significant shift is the adoption of trace-based auditing for compliance. In AI systems, runtime traces capture decision-making processes that static source code cannot, providing a critical layer of documentation for regulatory reviews [42]. Harrison Chase, the founder of LangChain, explained this evolution succinctly:
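At minimum, a trace is an ordered, append-only log of what the agent observed and decided. The sketch below writes JSON lines with a run ID and sequence number; the field names are assumptions, and a production system would typically ship these records to an observability backend rather than keep them in memory.

```python
from __future__ import annotations

import json
import time
import uuid


class TraceRecorder:
    """Append-only, JSON-lines record of what an agent saw, decided, and did."""

    def __init__(self, run_id: str | None = None) -> None:
        self.run_id = run_id or uuid.uuid4().hex
        self.events: list[str] = []

    def record(self, kind: str, **data) -> None:
        """Append one event; the sequence number preserves decision order for auditors."""
        event = {"run_id": self.run_id, "seq": len(self.events), "ts": time.time(), "kind": kind, **data}
        self.events.append(json.dumps(event, sort_keys=True))

    def dump(self) -> str:
        return "\n".join(self.events)
```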

"In software, the code documents the app; in AI, the traces do" [42].

The role of autonomous agents is also expanding rapidly. By early 2026, between 16% and 23% of all code contributions involved autonomous agents [42]. To ensure these agents operate reliably, organisations are implementing deterministic patterns like "Observe → Act → Verify → Replan", which prevent recursive loops and validate outputs against expected states [16].
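The "Observe → Act → Verify → Replan" pattern differs from a plain ReAct loop in that every step has an explicit post-condition and replanning is capped. In this sketch `act`, `expected`, and `replan` are hypothetical callbacks supplied by the caller; `replan` must return the full list of remaining steps, and the `max_replans` cap is what rules out recursive loops.

```python
from __future__ import annotations

from typing import Callable


def observe_act_verify_replan(
    state: dict,
    plan: list[str],
    act: Callable[[dict, str], dict],
    expected: Callable[[dict, str], bool],
    replan: Callable[[dict, str], list[str]],
    max_replans: int = 2,
) -> tuple[dict, bool]:
    """Execute a plan step by step, verify each resulting state, and replan a bounded number of times.

    Returns the last verified state and whether the whole plan completed.
    """
    replans = 0
    queue = list(plan)
    while queue:
        step = queue.pop(0)
        # Act on a shallow copy so an unverified step never replaces the last good state.
        new_state = act(dict(state), step)
        if expected(new_state, step):  # Verify against the step's expected post-condition
            state = new_state
            continue
        if replans >= max_replans:     # Hard cap: no unbounded replanning
            return state, False
        replans += 1
        queue = replan(state, step)    # Replan from the last verified state
    return state, True
```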

As the market for autonomous AI agents is set to grow from €8.1 billion in 2025 to €247 billion by 2035 [40], companies that prioritise strong verification and security measures will be best positioned to succeed. This is especially true in Europe, where the push for sovereign AI - keeping infrastructure and data within national borders - is accelerating. Sovereign clouds, driven by regulatory requirements, are becoming essential for achieving scalable, secure, and autonomous AI systems [40].
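For scale, growing from €8.1 billion in 2025 to €247 billion in 2035 implies a compound annual growth rate of roughly 41% over those ten years:

$$
\text{CAGR} = \left(\frac{247}{8.1}\right)^{1/10} - 1 \approx 30.5^{0.1} - 1 \approx 0.41
$$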

Conclusion: Moving Forward with Execution-Focused AI

The move toward execution-focused AI isn't just about adopting the latest technology - it's about transforming pilot projects into measurable business outcomes. Many pilot failures highlight a disconnect between experimental AI and achieving operational success. The issue isn't a lack of enthusiasm or funding; instead, it's that organisations often treat AI as a side project rather than a critical part of their operations.

To address this, companies need to rethink their workflows entirely. When AI enters the equation, how work is done must evolve. This means shifting from basic tools like chatbots that answer questions to operational AI systems that actively create, manage, and sustain long-term capabilities [3]. David Clarke, Global Managing Director at Grant Thornton, sums it up well:

"Execution today isn't about doing more, it's about closing the loop. In 2026, the gap won't be between companies that use AI and those that don't. It'll be between companies that embed it into daily operations and those that keep talking about transformation" [44].

Building trust is another critical step. As Lin Qiao, CEO of Fireworks AI, and Ali Ansari, CEO of micro1, point out:

"The bottleneck is not interest, talent, or budget. It is trust. And trust is built through reliable inference and evaluations grounded in expert human knowledge" [2].

To gain this trust, organisations should focus on trace-level observability and implement strict guardrails to ensure AI outputs align with real-world performance. Starting with a few high-impact use cases, improving data governance, and setting clear KPIs can lay the groundwork for scaling AI effectively [45]. These are some of the actionable strategies discussed at the RAISE Summit.

For businesses ready to move beyond the pilot stage, the RAISE Summit provides a wealth of practical insights. With over 9,000 attendees, 350+ speakers, and sessions dedicated to execution-focused AI, the event dives into key topics like production architecture, hybrid verification methods, and governance frameworks. These are the tools that separate teams that merely demo AI from those that deliver it [1].

Success in this new AI era will belong to organisations that invest in strong data infrastructures, redesign workflows around AI, and adopt disciplined engineering practices. The shift to execution-focused AI is already happening - will your organisation lead the way or fall behind?

FAQs

What makes execution-focused AI different from a chatbot?

Execution-focused AI takes things a step further than chatbots, which are mainly built to reply to user prompts with text-based answers. These advanced systems don’t just respond - they act. They handle complex tasks, automate workflows, and make decisions using a reasoning engine that enables them to plan, execute, observe, and refine their actions until they achieve specific goals.

What sets them apart from traditional chatbots is their dynamic functionality. Instead of being static, execution-focused AI integrates seamlessly with various tools, manages workflows, and delivers results that are both scalable and measurable. This makes them a game-changer for businesses looking to streamline operations and achieve tangible outcomes.

How do you prove an AI agent really completed a task?

To confirm that an AI agent has actually completed a task, require verifiable evidence rather than trusting its own report: logs, test results, screenshots, or the database changes the task should have produced. Evaluate the full trajectory of actions and tool calls, not just the final output, and check both against defined success criteria.

Ongoing testing, performance monitoring, observability, and governance keep this verification reliable over time, while measurable outcomes such as ROI show whether completed tasks deliver real business value. These methods are especially useful for tracking actions and verifying task completion in complex workflows and real-world applications.

What’s the fastest way to move an AI pilot into production?

The quickest path to getting an AI pilot into production is by following a structured plan that includes clear governance, operational readiness, and a phased rollout. A 90-day framework that integrates MLOps practices and governance standards (like NIST AI RMF) helps ensure the process is safe, scalable, and repeatable. Begin with shadow mode testing to confirm the AI's value, then move into governed autonomy for production. Along the way, standardise KPIs and set guardrails to make scaling as seamless as possible.
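Shadow mode can be sketched as running the AI on every case while only the human decision takes effect, then measuring how often the two agree. The function names and the 95% promotion threshold below are hypothetical; the right KPI and threshold depend on the use case.

```python
from __future__ import annotations

from typing import Callable


def shadow_run(
    cases: list[dict],
    human_decision: Callable[[dict], str],
    ai_decision: Callable[[dict], str],
) -> dict:
    """Shadow mode: the AI decides on every case, but only the human decision is acted on.

    Disagreements are collected for review; nothing the AI outputs reaches production.
    """
    disagreements = []
    for case in cases:
        actual = human_decision(case)  # what production actually uses
        proposed = ai_decision(case)   # recorded only
        if proposed != actual:
            disagreements.append({"case": case, "human": actual, "ai": proposed})
    agreement = 1 - len(disagreements) / len(cases) if cases else 0.0
    return {"agreement_rate": agreement, "disagreements": disagreements}


def ready_for_governed_autonomy(report: dict, threshold: float = 0.95) -> bool:
    """Hypothetical promotion gate: graduate only once agreement clears an agreed KPI."""
    return report["agreement_rate"] >= threshold
```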